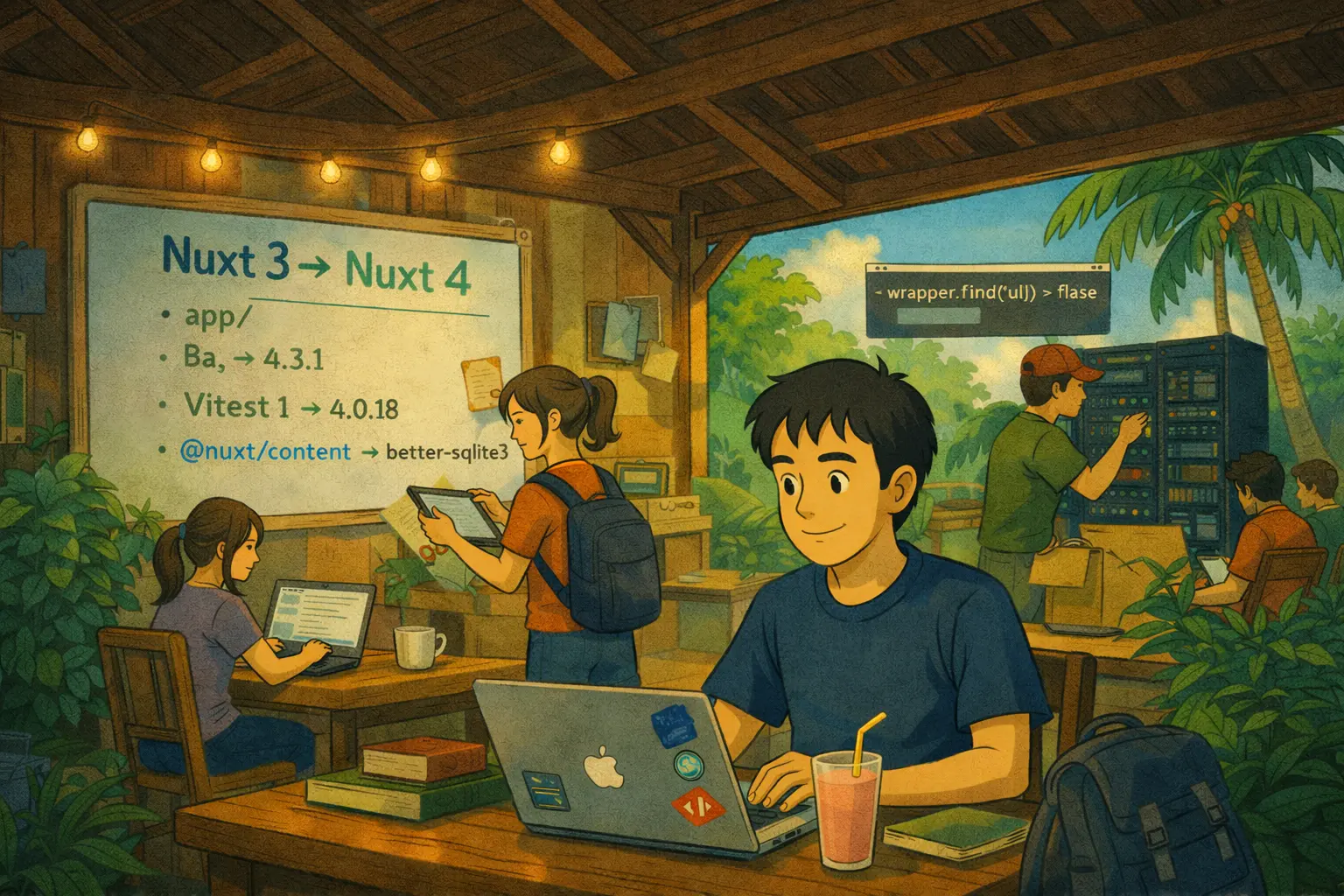

AI Agent Upgraded My Codebase to Nuxt 4

From Nuxt 3 to Nuxt 4 in two hours. 45 passing tests. Zero manual edits. Here's what agentic coding actually looks like in the wild.

"Be specific" is the most common advice for prompt engineering. It's true but not very useful - it's the equivalent of "write good code" as debugging advice.

I've spent a year building tools that use LLMs to generate production code. These are the techniques that improved output quality in measurable ways.

Most prompts describe the task first and mention the output format at the end. Reverse this. Describe the exact output format first - file structure, function signatures, return types - then describe what the code should do.

Why it works: LLMs generate token by token, and each token is conditioned on everything before it. When the output format is established early, every subsequent token is constrained by it. The model spends less "attention" on figuring out how to structure the output and more on getting the logic right.
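You don't have to remember this ordering each time. A small template function can enforce it. The sketch below is hypothetical: `buildFormatFirstPrompt`, `PromptSpec` and `formatList` are illustrative names, not a library API. It places the exact signature before the behavioural requirements.

```typescript
// Hypothetical helper (not a library API): builds a prompt that states the
// output contract before describing the behaviour.
interface PromptSpec {
  signature: string;      // exact signature the model must produce
  requirements: string[]; // behaviours the implementation must handle
}

// Joins items as "a, b, and c" so the prompt reads naturally.
function formatList(items: string[]): string {
  if (items.length <= 2) return items.join(' and ');
  return `${items.slice(0, -1).join(', ')}, and ${items[items.length - 1]}`;
}

function buildFormatFirstPrompt(spec: PromptSpec): string {
  return [
    // 1. Output format first: the model sees the contract before anything else.
    'The output should be exactly this TypeScript function signature:',
    '',
    spec.signature,
    '',
    // 2. Behaviour second, phrased against the signature already fixed above.
    `Now implement this function so that it handles: ${formatList(spec.requirements)}.`,
  ].join('\n');
}

const emailPrompt = buildFormatFirstPrompt({
  signature: 'export function validateEmail(email: string): boolean',
  requirements: ['missing @ symbol', 'invalid TLD', 'consecutive dots', 'empty strings'],
});
console.log(emailPrompt);
```

Keeping the template in code means every prompt built from it gets the same ordering, whoever writes the requirements.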

```text
# Less effective
Write a TypeScript function that validates email addresses using regex
and returns true/false. The function should handle edge cases like
missing @ symbol and invalid domains. Return the function as a clean
TypeScript snippet.

# More effective
The output should be exactly this TypeScript function signature:

export function validateEmail(email: string): boolean

Now implement this function so that it handles: missing @ symbol,
invalid TLD, consecutive dots, and empty strings.
```
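For reference, here is one possible implementation that a prompt like the more effective one could yield. It is a sketch, not an RFC 5322-complete validator. The allowed local-part characters, the domain-label rule, and the notion of a valid TLD (assumed here to be two or more ASCII letters) are all assumptions chosen for illustration.

```typescript
// A sketch under stated assumptions, not an RFC 5322-complete validator.
const LOCAL_PART = /^[A-Za-z0-9._%+-]+$/;                          // assumed allowed local-part characters
const DOMAIN_LABEL = /^[A-Za-z0-9](?:[A-Za-z0-9-]*[A-Za-z0-9])?$/; // no leading/trailing hyphen
const TLD = /^[A-Za-z]{2,}$/;                                     // assumption: TLD is 2+ letters

export function validateEmail(email: string): boolean {
  // Empty strings (and whitespace-only input) are invalid.
  if (email.trim() === '') return false;

  // Missing @ symbol, or more than one: require exactly one, not at either end.
  const at = email.indexOf('@');
  if (at <= 0 || at !== email.lastIndexOf('@') || at === email.length - 1) return false;

  // Consecutive dots are rejected anywhere in the address.
  if (email.includes('..')) return false;

  const local = email.slice(0, at);
  const domain = email.slice(at + 1);

  // Local part: allowed characters only, and no leading or trailing dot.
  if (!LOCAL_PART.test(local) || local.startsWith('.') || local.endsWith('.')) return false;

  // Domain: at least two well-formed labels, the last of which must be a valid TLD.
  const labels = domain.split('.');
  if (labels.length < 2 || !labels.every((label) => DOMAIN_LABEL.test(label))) return false;
  return TLD.test(labels[labels.length - 1]);
}
```

Each requirement named in the prompt maps to one visible check. That makes it easy to confirm the model covered them all.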

When you describe behavior in prose, the LLM has to infer the exact semantics. When you provide a failing test, the semantics are unambiguous.

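```typescript
// Include this in your prompt
// Assumes Vitest; the import path and URL below are placeholders.
import { test, expect, vi } from 'vitest'
import { fetchWithRetry } from './fetchWithRetry'

const url = 'https://api.example.com/data'
const mockFetch = vi.fn()
vi.stubGlobal('fetch', mockFetch)

test('handles rate-limited responses', async () => {
  // fetch resolves (it does not reject) on a 429, so mock a resolved 429 response first
  mockFetch.mockResolvedValueOnce(new Response(null, { status: 429 }))
  // ...then a successful response for the retry
  mockFetch.mockResolvedValueOnce(new Response(JSON.stringify({ data: 'ok' })))

  const result = await fetchWithRetry(url)

  expect(result.data).toBe('ok')
  expect(mockFetch).toHaveBeenCalledTimes(2)
})
```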

The LLM now knows exactly what "handles rate-limited responses" means in executable terms. The generated code can be directly tested against the provided case.
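A minimal implementation that would satisfy a test like this might look as follows. Everything here is an assumption for illustration:

- the retry count and the exponential backoff controlled by `baseDelayMs`;
- the injectable `fetchImpl` parameter, which lets tests pass a fake without stubbing globals;
- the choice to treat only HTTP 429 as retryable.

Note that `fetch` resolves, rather than rejects, when the server answers with a 429, so the status code has to be checked explicitly.

```typescript
// Hypothetical sketch: retries on HTTP 429 with exponential backoff, then parses JSON.
type FetchLike = (url: string) => Promise<Response>;

interface RetryOptions {
  retries?: number;      // extra attempts after the first (assumed default: 3)
  baseDelayMs?: number;  // first backoff delay, doubled on each retry (assumed default: 500)
  fetchImpl?: FetchLike; // injectable for tests; defaults to the global fetch
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

async function fetchWithRetry<T = unknown>(
  url: string,
  { retries = 3, baseDelayMs = 500, fetchImpl = (u: string) => fetch(u) }: RetryOptions = {},
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    const res = await fetchImpl(url);
    // fetch resolves on HTTP error statuses, so the 429 must be detected via res.status.
    if (res.status === 429 && attempt < retries) {
      await sleep(baseDelayMs * 2 ** attempt); // 500 ms, 1 s, 2 s, ... with the defaults
      continue;
    }
    // Out of retries, or a non-retryable error status: surface it to the caller.
    if (!res.ok) throw new Error(`Request failed with status ${res.status}`);
    return (await res.json()) as T;
  }
}
```

Because `fetchImpl` defaults to the global `fetch`, the call site stays as simple as `fetchWithRetry(url)`.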

If you're asking for a function that will live inside a larger file, include the surrounding code. The LLM needs to understand what that code already provides and how the new function has to fit into it.

Prompts that include "here's the surrounding context, write the missing function" consistently outperform prompts that just describe what the function should do.
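One way to do this consistently is to cut the file at a placeholder and send both halves along with the instruction. The helper below is a hypothetical sketch. `buildFillInPrompt`, the `// FILL_IN` placeholder convention and the section markers are illustrative choices, not part of any tool.

```typescript
// Hypothetical helper: keeps the real file around the gap so the model sees imports,
// types, and neighbouring functions before it writes the missing piece.
function buildFillInPrompt(fileSource: string, placeholder: string, instruction: string): string {
  const idx = fileSource.indexOf(placeholder);
  if (idx === -1) throw new Error(`Placeholder not found: ${placeholder}`);

  const before = fileSource.slice(0, idx).trimEnd();
  const after = fileSource.slice(idx + placeholder.length).trimStart();

  return [
    "Here's the surrounding context. Write only the code that replaces the placeholder.",
    '--- code before the placeholder ---',
    before,
    '--- code after the placeholder ---',
    after,
    '--- task ---',
    instruction,
  ].join('\n');
}

// Illustrative input: the code after the gap already names deleteUser,
// which tells the model exactly what it must export.
const source = [
  "import { db } from './db'",
  '',
  'export async function getUser(id: string) {',
  '  return db.users.findById(id)',
  '}',
  '',
  '// FILL_IN',
  '',
  'export const api = { getUser, deleteUser }',
].join('\n');

const fillInPrompt = buildFillInPrompt(source, '// FILL_IN', 'Write deleteUser(id: string), following the style of getUser.');
```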

For complex algorithms or multi-step transformations, add a self-critique step:

```text
Write the implementation, then review it for: edge cases, off-by-one
errors, and places where the logic might fail for empty inputs.

After the review, write the corrected version.
```

This roughly replicates the test-then-fix loop that a human developer would do. The models are good at spotting their own bugs when explicitly prompted to look for them - they just don't do it automatically.
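If the same review step is reused across many prompts, it can live in one place. The function below is a hypothetical sketch that appends review-then-correct instructions to any task. The default checklist mirrors the three items in the prompt above, and the name `withSelfCritique` is illustrative.

```typescript
// Hypothetical helper: appends a review-then-correct step to any code-generation task.
const DEFAULT_CHECKS = ['edge cases', 'off-by-one errors', 'places where the logic might fail for empty inputs'];

function withSelfCritique(task: string, checks: string[] = DEFAULT_CHECKS): string {
  return [
    task,
    '',
    // Step 1: the first draft.
    'Write the implementation, then review it for:',
    // Step 2: an explicit checklist, since the model won't review its own code unprompted.
    ...checks.map((check) => `- ${check}`),
    '',
    // Step 3: the revision, so the final answer reflects the review.
    'After the review, write the corrected version.',
  ].join('\n');
}

const critiquePrompt = withSelfCritique('Implement binarySearch(xs: number[], target: number): number.');
```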

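For novel or complex implementations, run two passes. First comes a design pass, in which the model talks through its approach, assumptions, and edge cases without writing any code. Then an implementation pass writes the code.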

The design pass surfaces assumptions and edge cases before code is written. It's the equivalent of talking through an implementation with a colleague before sitting down to code.
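The two passes are easy to wire together in code. In this sketch, `Complete` stands in for whatever model client you use; it is a hypothetical type, not a specific SDK. `twoPassGenerate` and the prompt wording are likewise illustrative.

```typescript
// Hypothetical model client: any function that sends a prompt and resolves with the reply text.
type Complete = (prompt: string) => Promise<string>;

async function twoPassGenerate(task: string, complete: Complete): Promise<{ design: string; code: string }> {
  // Pass 1: design only. Ask for approach, assumptions, and edge cases, explicitly no code.
  const design = await complete(
    `${task}\n\nDo not write code yet. Describe your approach, list the assumptions you are making, ` +
      'and enumerate the edge cases the implementation must handle.',
  );
  // Pass 2: implementation, with the design from pass 1 fed back in as context.
  const code = await complete(
    `${task}\n\nHere is the design to follow:\n${design}\n\nNow write the implementation. ` +
      'Handle every edge case listed in the design.',
  );
  return { design, code };
}
```

Keeping the passes as separate calls means the design can be read, and corrected, before any code is generated.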

These aren't theoretical - each came from observing where generated code failed and working back to what was missing from the prompt. The pattern across all of them: LLMs generate better code when you give them less room to make structural decisions and more room to focus on logic.


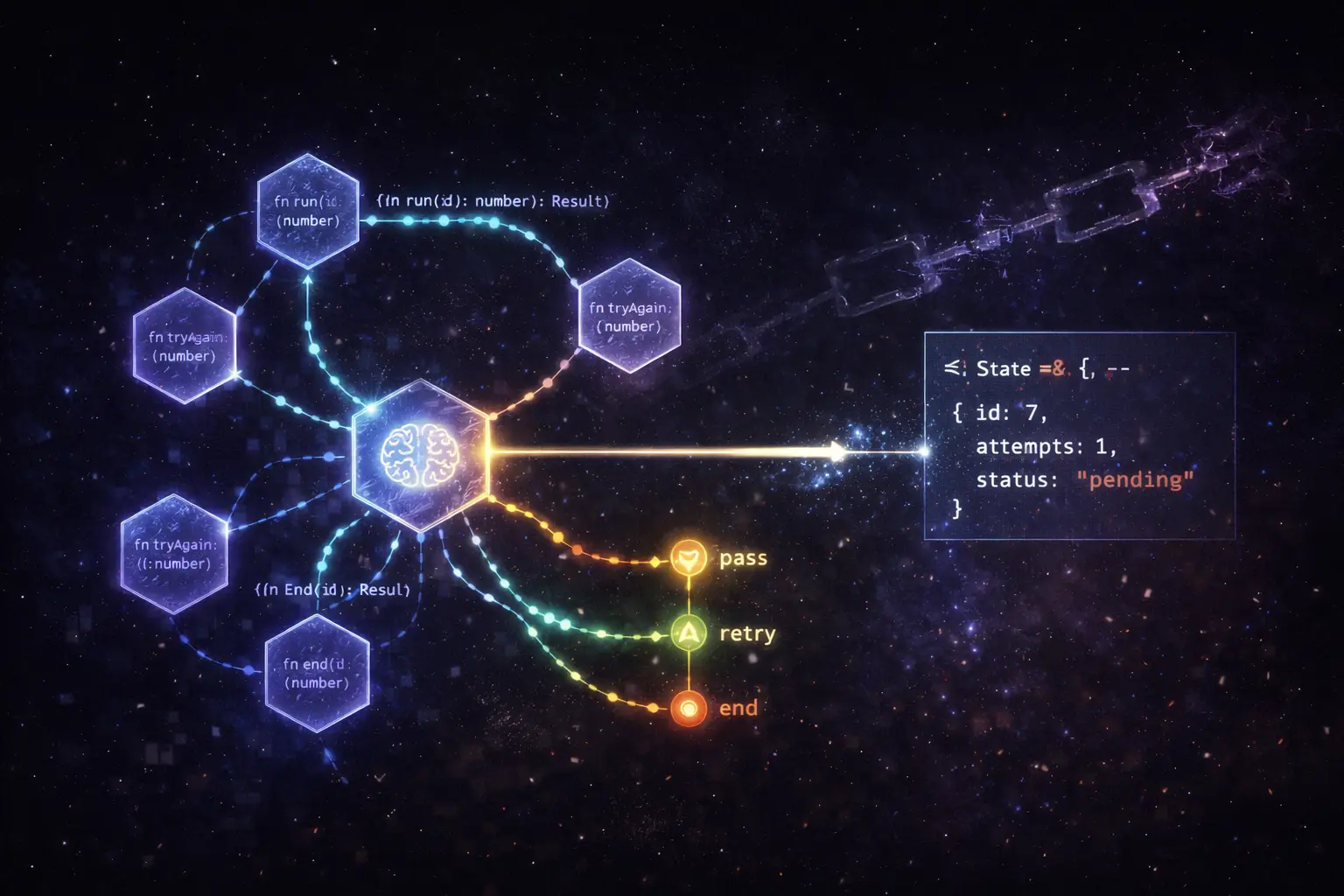

LangChain is great for chains. LangGraph is great for agents. I rebuilt a document-processing pipeline as a graph and the difference in debuggability was immediately obvious.

A look into how a freelance full-stack engineer brings value, speed, and flexibility to modern software teams.